One month ago I wrote about the pornographic implications of recent progress in deep learning. I still agree with the core thesis of that piece (prompting is hard, things will really take off when we compose RL and large models) but my summaries of model accessibility and scaling economics are now outdated. One month is an entire lifetime in the machine learning field! In the interest of keeping everyone updated, I’d like to revisit these two topics and discuss some exciting new developments.

all your weights are belong to us

The most significant development in the accessibility of large models was StabilityAI’s announcement that they would release their Stable Diffusion image model to the public and allow anyone to run and fine-tune it on their own hardware. Or at least it was the most significant development until the model weights leaked early and 4chan users immediately went to work generating fetish porn.

Naturally this led to some media backlash and increased concern about deepfakes. To their credit, StabilityAI did not abandon their hands-off NSFW policy (“make it on your own GPUs”), but I suspect OpenAI and other companies will learn from this example and lock their models down even harder.

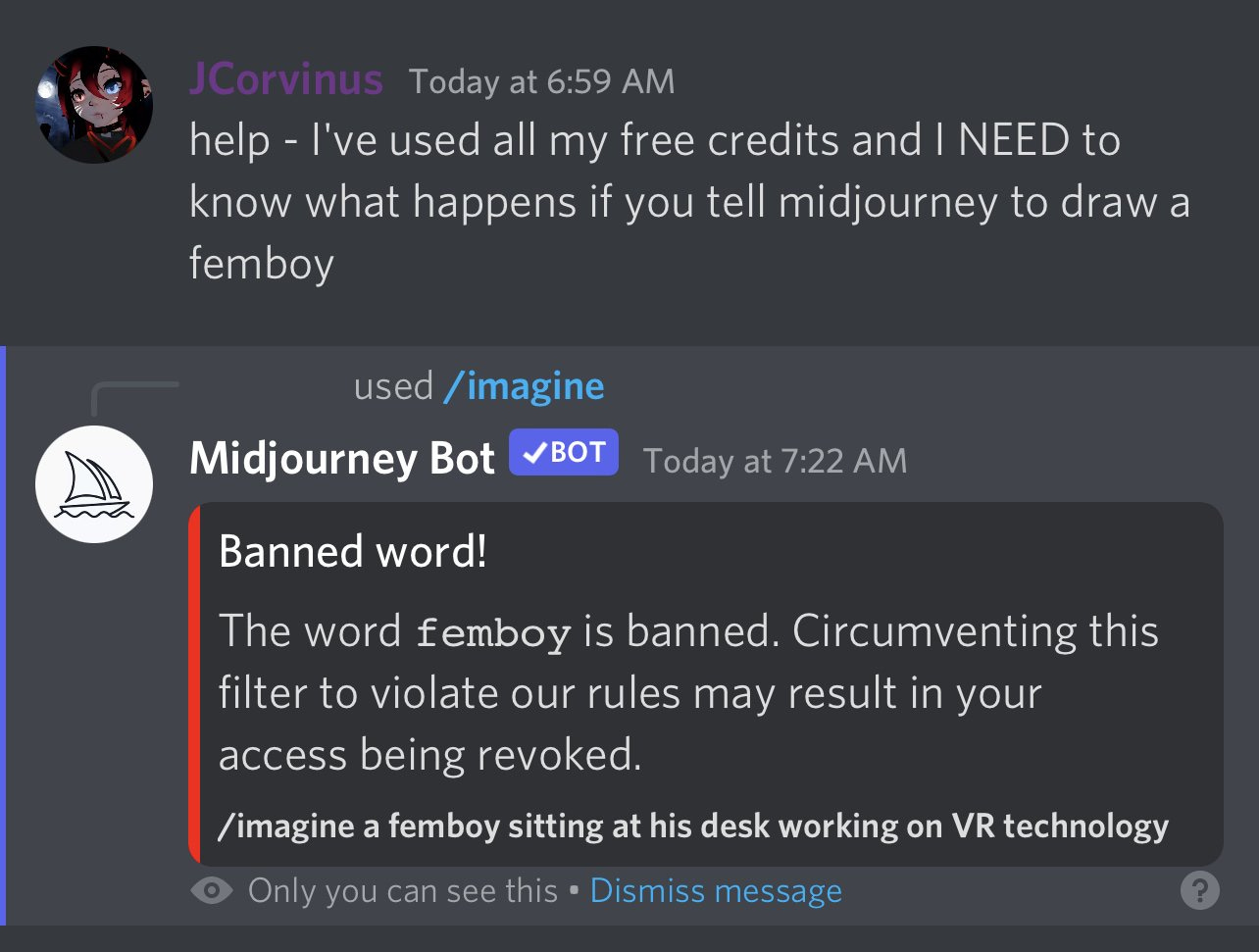

The anti-NSFW mitigations used today range from banning certain keywords to hilariously bad hacks, but the research community is attacking the problem at its roots (training datasets), and in a few years it may be difficult to generate porn with proprietary models. It’s also unlikely that the biggest industry players (OpenAI, DeepMind, etc.) will release model weights like StabilityAI did.

Meanwhile, content platforms are trying to figure out how to react. Reddit banned AI porn subreddits after the Stable Diffusion release, and OnlyFans says it will deactivate suspected deepfakes:

TechCrunch reached out to one of the major adult content platforms, OnlyFans, who said that it would “continuously” update its technology to “address the latest threats to creator and fan safety, including deepfakes.”

“All content on OnlyFans is reviewed with state-of-the-art digital technologies and then manually reviewed by our trained human moderators to ensure that any person featured in the content is a verified OnlyFans creator, or that we have a valid release form,” an OnlyFans spokesperson said via email. “Any content which we suspect may be a deepfake is deactivated.”

This approach might work for sites which already have stringent verification processes, but in general an arms race between deepfake detection and deepfake generation can only end in a victory for the porn makers—as we learned from GANs, a model which can detect AI-generated outputs is the most useful tool for improving those outputs. Unless political winds change and the anti-porn lobby wins some legislative victories, we may end up with AI-generated porn banned on social media platforms in name only, as identifying generated images will be infeasible in practice.

In spite of the backlash, the Stable Diffusion release was a huge victory for AI-generated porn, and it decisively demonstrated the power of fine-tuning. It’s only been two weeks since the weights leaked and there are already polished sites like pornpen.ai, which I hope will pique more interest in gooner community machine learning projects.

based and scalepilled

If proprietary models become completely sanitized, then the future of hyperporn is in open-source models. In my previous post I expressed some pessimism about the compute cost required to train image/video models from scratch. I am happy to report that the era of Kaplan scaling laws is over; long live the new Chinchilla scaling laws!

When the Chinchilla paper was released in March 2022, everyone realized that, if its results generalize, (1) current models are not compute-optimal and could be trained for a fraction of the cost, and (2) the next bottleneck on better models will be a lack of good training data, not a lack of compute. We now have a bunch of empirical results which support these conclusions. Some examples of compute cost savings include StabilityAI revealing that Stable Diffusion’s compute cost was around $600,000 and MosaicML showing off some extremely cheap text models.
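To make the “compute-optimal” claim concrete, here is a back-of-the-envelope sketch. It leans on two common simplifications that are my assumptions, not exact results: training compute is roughly 6 FLOPs per parameter per token, and the Chinchilla-optimal data budget is roughly 20 tokens per parameter. The function names are made up for illustration, and the numbers describe text models; nobody has shown that the same constants carry over to image or video models.

```python
import math

# Assumption: widely quoted rule of thumb derived from the Chinchilla results,
# not an exact constant.
TOKENS_PER_PARAM = 20.0
# Assumption: standard approximation for dense transformer training cost
# (FLOPs per parameter per training token).
FLOPS_PER_PARAM_TOKEN = 6.0


def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute: C = 6 * N * D."""
    return FLOPS_PER_PARAM_TOKEN * n_params * n_tokens


def compute_optimal(flops_budget: float) -> tuple[float, float]:
    """Split a FLOP budget into (parameters, tokens) under the rule of thumb.

    With D = 20 * N and C = 6 * N * D we get C = 120 * N**2,
    so N = sqrt(C / 120).
    """
    n_params = math.sqrt(flops_budget / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens


if __name__ == "__main__":
    # Chinchilla itself: 70B parameters trained on 1.4T tokens.
    budget = training_flops(70e9, 1.4e12)
    n_params, n_tokens = compute_optimal(budget)
    print(f"budget: {budget:.3g} FLOPs")
    print(f"optimal params: {n_params:.3g}, optimal tokens: {n_tokens:.3g}")
```

Feeding Chinchilla’s own budget back in recovers roughly 70B parameters and 1.4T tokens, which is just the rule of thumb agreeing with itself. The useful part is the square root: under these assumptions, quadrupling your compute only doubles the optimal model size, and the other doubling has to come from more data.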

We’re already hitting training data limitations for recommender systems and models which write code, so researchers are experimenting with new techniques to automate data creation. It’s unclear if we’ll find similar tricks for text and images, as these don’t have the same clearly defined evaluation criteria. Fortunately, video-generating models probably won’t be data-constrained: research suggests the same scaling laws still apply to video, and we have massive training sets available, e.g., all of YouTube.

More generally, the machine learning community is now very focused on scaling laws, and scaling techniques have moved from academic backchannels to mainstream consensus to YouTube tutorials. Thus I’m broadly optimistic that we’ll see more optimizations, further lowering the training cost of open-source models. We also haven’t seen a repudiation of the scale maximalist thesis—models will keep improving as they get larger, at least for a while; scale is all you need.

news you can use

“This is all very cool but I’m just a regular person who likes jerking off and I barely passed high school math. Where do I fit into all of this?” — you, probably

Machine learning research progress is like weed or pornography—it doesn’t demand anything of you, you can simply hang around and vibe. You can play around with pornpen.ai and other AI porn sites as they appear, and if you want to get more hands-on you can follow a tutorial and fine-tune Stable Diffusion on your favorite fetish. Sit back, relax, and enjoy man-made horrors beyond your comprehension.

If you’re an adult content creator, the increased accessibility of large models creates an interesting predicament. Weird internet nerds are almost certainly fine-tuning image models on your content, and while AI art is still in a legal gray area, you probably can’t stop them or protect your likeness. Professional porn stars already struggle with scam/copycat accounts on social media platforms, and too-cheap-to-meter deepfakes will exacerbate this problem. However, performers can use the same tools to automate content creation, and over time the results will become less and less distinguishable from a real shoot.

Remember also that it’s still early days for large machine learning models. Generated video is next and all of the imperfections in current-gen image models will get smoothed out with scale. Where things go from there is anyone’s guess! The future is bright, goonfriends. ✨