This is the age of miracles.

The invention of the transformer in 2017 has revolutionized the machine learning field. Machine learning models can now create striking images, write articles, do word problems, play strategy games, explain jokes, fold proteins, and so on. If your only exposure to this stuff is DALL-E mini memes, I encourage you to follow the links and go play with GPT-3 to see the magic for yourself. The DALL-E 2 subreddit is also fun.

Large transformer models are still an open research area, but consumer software will follow: in a few years language models may be personal assistants and programmers, image models may be illustrators and animators, and ML tools will make scientific discoveries and draft legal documents.

There are reasons to be optimistic about future progress, too. Fully generated video is on the horizon. Models keep getting better as they get bigger and there’s no end in sight. It’s so hard to find diminishing returns that researchers joke about “scaling maximalism,” the idea that we can build artificial general intelligence simply by adding more computing power to existing models. Scale is all you need!

finding the fun, building the fun

If the porn applications of this research are not obvious, here are some things which are technically possible with current tools:

- Briefly describe your deepest, darkest fantasy and use a language model to generate a professional-quality erotica story (or an entire anthology) about it
- Have that erotica read to you in your favorite pornstar’s voice
- Convert that text into a newly generated photoset, in full quality, with the ability to make suggestions or change anything you like
- Erase any part of an image and have an image model fill in the blanks, say to undress your favorite celeb or put hentai tentacles around them or whatever
- Peruse an infinite supply of waifus (or even anime waifus)

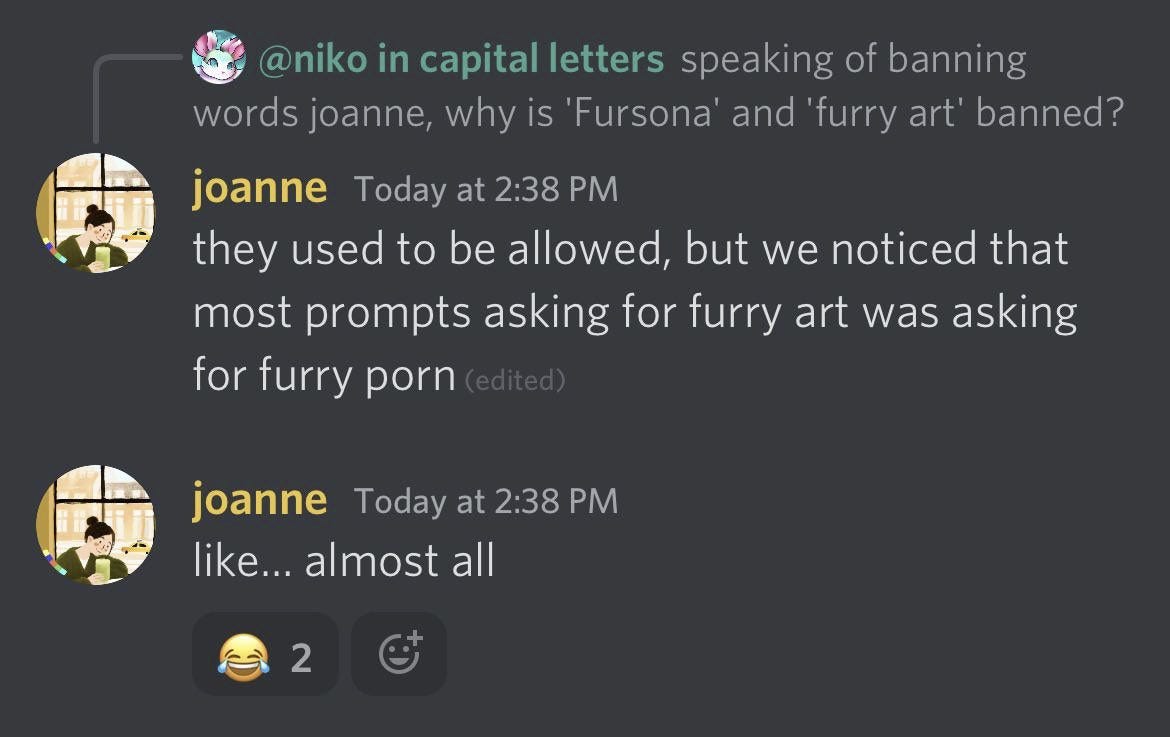

I say “technically possible” and not “you can do this right now” because today’s commercial tools have restrictions. GPT-3 doesn’t have much of a content filter, but voice-mimic products often require proof of identity and OpenAI works hard to prevent DALL-E 2 from drawing porn.

But the history of porn is the iterative process of finding new distribution channels to route around prudish gatekeepers. How will this play out for machine learning?

First, some background for the uninitiated: today’s autoregressive transformer models are big neural networks that require lots of data (say, terabytes of text from the internet) and lots of computing power, usually on GPUs, to train. Once a model is trained, it can be applied, for example by telling DALL-E 2 what image to create or asking GPT-3 to write an essay.

“Fine-tuning” is the process of taking a trained model and showing it a second, smaller dataset so that it specializes in that data. Fine-tuning is remarkably effective: in one line of research, language models trained on English text are fine-tuned on code, and the fine-tuned models can solve complex programming challenges. If we could fine-tune a generic image model on porn images, it would become very good at generating porn images.

But OpenAI, Google, etc., are terrified of internet randos fine-tuning their models on naughty content, so they provide or sell access to the models (with NSFW content filters!) rather than releasing the fully trained models for the public to examine or fine-tune. For example, you can interact with DALL-E 2 through a website, but OpenAI will probably never fully release it.

We want to make porn. Can we say ‘screw the tech companies’ and train our own unconstrained models from scratch? Maybe. The bottlenecks on model quality right now are access to datasets and the cost of compute: according to rumors, GPT-3 cost ~$10 million to train and DALL-E 2 cost ~$1 million. There are open-source models like GPT-J 6B and DALL-E Mini, but they’re not as impressive as their proprietary counterparts.

Fortunately, training cost will decline as we discover more efficient training methods and as GPUs get better, which will open the door for amateur and community-funded projects. Of course, things would move faster if someone in the porn industry with deep pockets understood the potential of this technology and funded new models without content restrictions.

accelerate

“Ok, so in a few years pornified DALL-E will make me a photorealistic picture of Tommy King slurping a tentacle on Mars. Neat, but doesn’t change my life.” —you, probably

I think there’s a strong case that machine learning will go far beyond the obvious “draw a picture of my fantasy” usage and transform how we consume porn. The important thing to realize is that we’re not just in the early days of developing large models; we also barely understand how to leverage them.

Today the best models are autoregressive, i.e. they add to whatever input we give them, and we interact with them via prompts. We type some text into GPT-3 and it continues the paragraph, we type some text into DALL-E and it makes an image. This process exposes a lot of weird artifacts from the internals of the model—telling GPT-3 “let’s think step by step” makes it perform better on reasoning tasks, and you need to know a bunch of weird input tricks to get DALL-E to generate really striking images.
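To make “they add to whatever input we give them” concrete, here is a deliberately tiny sketch of an autoregressive loop. It is an assumption-heavy toy, not how GPT-3 works internally: the `NEXT_WORDS` table and `generate` function are hypothetical, the model works on whole words instead of tokens, and it picks uniformly from a hand-written list instead of sampling from probabilities learned from terabytes of text. The part it does share with real models is the loop itself: look at the sequence so far, append one more piece, and repeat on the longer sequence.

```python
import random

# Hypothetical toy "model": which words may follow which.
# Real language models learn next-token probabilities from huge datasets;
# this hand-written table only stands in for that learned knowledge.
NEXT_WORDS = {
    "the": ["model", "prompt"],
    "model": ["continues"],
    "prompt": ["ends"],
    "continues": ["the"],
    "ends": [],
}


def generate(prompt: str, max_new_words: int = 5, seed: int = 0) -> str:
    """Extend the prompt one word at a time, conditioning on the last word."""
    rng = random.Random(seed)
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = NEXT_WORDS.get(words[-1], [])
        if not candidates:
            break  # no known continuation, similar to emitting an end-of-text token
        words.append(rng.choice(candidates))  # append one word, then loop again
    return " ".join(words)


print(generate("the prompt"))
print(generate("the model"))
```

The prompt is never rewritten, only extended, which is why the wording of the prompt steers everything the model produces afterward.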

Prompt-writing is also bottlenecked by human creativity and expressivity—you need to visualize what you want and specify it in sufficient detail, and as your domme knows all too well, you’re not very good at that. We’ll better unlock the potential of autoregressive models when we apply other tools to automate this process.

Consider: You’re wearing a VR headset with an eye-tracking sensor and you put on one of those split-screen PMVs. The sensor trains a reinforcement learning model which sits on top of an image model. The RL model learns about your preferences from your eye movements and passes information to the image model, which generates new pics or gifs or short videos to seamlessly blend into the PMV. What kinds of images would this system generate?

General rule for reasoning about agents which are better than you in some domain: You can’t predict exactly what the agent will do, but you can predict it will achieve its goals. I can’t predict which chess moves AlphaZero will play, but I can predict it will checkmate my ass. Therefore I expect the VR setup to make weird and unnatural porn which is unbelievably hot and satisfying to view, actual visual crack.

The best-case scenario is that these systems discover stimuli which elicit a strong involuntary response, like a pleasurable version of trypophobia images or a more potent form of ASMR. But even if this doesn’t pan out, they will at least produce unlimited erotic novelty. Imagine the feeling of seeing hentai for the first time, forever.

Of course, contextless arousal is just the beginning. Language models know a lot about the world, including the latent spaces of our sexuality. GPT-3 is already good at erotic roleplay; in the long term, the same kind of knowledge will allow future models to invent fetishes, brainwash you into enjoying new experiences, and put you in all kinds of exciting mental states. It’s hard to imagine the upper bound of what is possible here, and that’s sort of the point: again, we can’t predict exactly what a more powerful optimization process will do, but we can be sure it will be Fun.

Initially only extremely online weirdos will appreciate this stuff. But as we know, innovations in advanced jerking off eventually make their way to the mainstream. The future is bright, goonfriends. ✨